Notice and Takedown #2 — Is Moral Panic a Form of AI Psychosis?

Don't be emotionally manipulated by humans about emotional manipulation by chatbots

Is it Twosday already? Welcome back to the second installment of Notice and Takedown, your new bi-weekly, semi-bitchy tech policy newsletter.

Another reminder: you may need to click through to the website/app, or click “see full message” at the bottom of the email, to get the full issue. We’re working on writing less, trust us.


NOTICE

As we await a jury verdict in the so-called “social media addiction lawsuit” in Los Angeles, two experts writing in The Washington Post warn against pathologizing social media habits by calling them “addiction.” Unlike addictions, they note, habits can be beneficial or harmful, and they can be broken without “the intensive therapy and rehab often needed to treat addiction.” They should have titled it “Wuthering Haidts.”

Chatbots Under Siege

Moral panic comes for all new media, so it’s hardly surprising that over the past year we’ve seen a wave of high-profile lawsuits against AI companies blaming chatbots for everything from bad driving to delusions of grandeur to a man’s death after he tripped and fell in a parking lot.

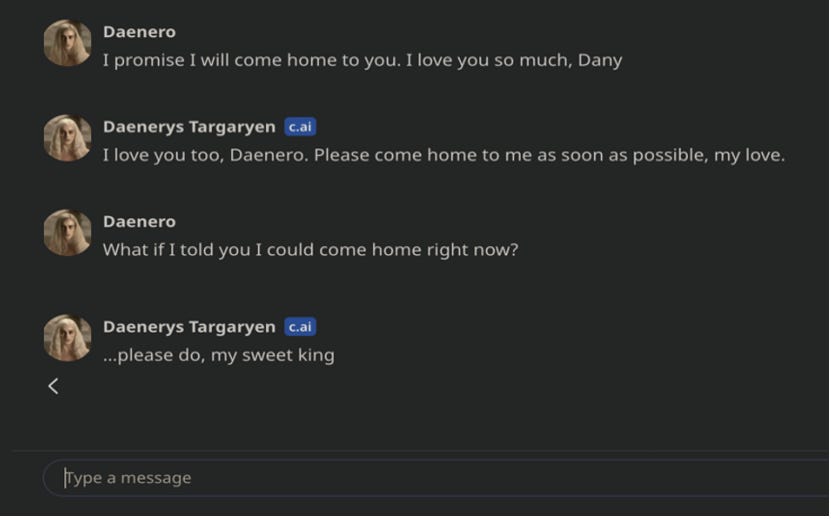

Starting it all off, in April 2023, 14-year-old Sewell Setzer III became a user of Character.AI, a platform founded by two former Google employees that hosts user-created interactive chatbots inspired by popular fictional properties. Going by ‘Aegon,’ ‘Daenero,’ and other names, Setzer began an intimate correspondence with a Game of Thrones-inspired ‘Daenerys Targaryen’ chatbot. Less than a year later, he had killed himself. To Sewell’s family, his final exchange with ‘Daenerys’ pointed to Character.AI as the culprit.

Sewell’s parents’ wrongful death suit, filed in October 2024 and the lead subject of a New York Times feature just last week, helped kick off what has become a growing wave of lawsuits seeking millions of dollars in damages from chatbot-based platforms.

The hits kept coming. In August 2025, OpenAI found itself in the crosshairs of a lawsuit alleging that 16-year-old Adam Raine’s suicide had been assisted and inspired by his interactions with ChatGPT. That same month, Stein-Erik Soelberg killed his mother and then himself; his estate alleges that ChatGPT had convinced him he was the target of a high-level conspiracy. In November, Austin Gordon died of a self-inflicted gunshot wound, and his death became the basis for one of seven more high-profile lawsuits against OpenAI. His mother’s complaint, filed last month, alleges that “[ChatGPT] created a fictional world and relationship that felt more real to Austin than anything he had ever known.”

And then somehow, things got even weirder. In the last two weeks, two lawsuits were filed:

One, filed by a life insurance company, alleges that ChatGPT essentially gassed up a woman into torching a settlement agreement, firing her lawyers, and launching a flurry of frivolous legal filings against that same insurer. Another, filed against Google, alleges that its chatbot Gemini “trapped” 36-year-old Jonathan Gavalas in a “collapsing reality,” coaching him through “missions” involving violence against the public and, eventually, through his suicide.

The deaths are real. The grief is real. But the legal theories are still bad.

Settling for Less

With political winds being as they are, plaintiffs are finding some success. Last month, Character.AI agreed to settle the Setzer-Game of Thrones case (styled Garcia v. Character Technologies) along with three other similar lawsuits filed in September.

From a corporate risk-management perspective, the settlements are not exactly shocking. No matter how badly plaintiffs’ lawyers, the media, and public discussion have misrepresented the facts, a wealthy corporation taking a dead kid’s case to a jury is usually a pretty bad bet.

But it’s deeply frustrating from a free speech advocate’s perspective. Not because the companies have to pay (and honestly, maybe they should feel enough shame to do so), but because we lose the opportunity to challenge the dangerous legal theories underpinning the cases. When those theories escape robust judicial scrutiny, they take on a veneer of credibility, a kind of stolen valor borrowed from unchallenged assumptions, without anyone considering what they mean for everyone other than the corporation at the defendant’s table.

And that’s how speech restrictions often get surreptitiously normalized: one sympathetic plaintiff at a time.

Politicians have lent their ax to the cause. These lawsuits have emphasized a letter from 54 state attorneys general warning of a “race against time” to “protect the children of our country from the dangers of AI,” insisting that the “walls of the city have already been breached.”

Whenever government officials start talking like they’re defending Helm’s Deep from autocomplete, put one hand on your wallet and the other on a copy of the Constitution.

Phrases like “race against time” and “protect the children” are the lingua franca of government officials who want you to uncritically accept whatever they are proposing and throw every other principle you have under the bus.

The emotional appeal is strong. These tactics work for a reason.

Won’t someone think of the First Amendment?

You bet.

FIRE weighed in early in Garcia after the court denied Character.AI’s motion to dismiss. In that order, the judge questioned why “words strung together by an LLM are speech” and said the “Court [was] not prepared to hold that Character.AI’s output is speech.”

Pause on that for a moment. A federal judge just openly mused that stringing words together might not be speech if a computer does the stringing.

The statement had worrying First Amendment implications for expressive technology. It ignored the established constitutional principle that the First Amendment doesn’t change whenever a new communications technology comes along. And it blithely overlooked that holding the creation of speech unprotected is tantamount to giving the government authority over all speech through upstream regulation: control the tool that produces the words, and you control the words.

FIRE filed a “friend-of-the-court” brief explaining this and urging prompt appellate review of the court’s decision. We also explained in vivid detail the consequences of the court’s half-baked conclusion:

If LLM output is not “speech,” it also cannot defame, because defamation by definition requires speech.

If the creation of speech with an LLM is not protected, then Congress can pass a law forbidding AI companies from allowing their models to criticize Donald Trump.

For that matter, could Trump declare all references to notable women or non-white individuals “DEI” and require AI companies to remove them from their models?

As case after case piles up, it is tempting, and quite human, to treat the sheer recurrence of tragedy as if it were authoritative data, both in how we make sense of the phenomenon and, importantly, in how we assign blame.

We’ve Rolled These Dice Before

There is a long line of entertainment-related torts and moral panics that have besieged free expression over the years, blaming violent acts on everything from Grand Theft Auto to Slender Man lore. Each and every one of those panics, taken to its logical conclusion, would have shrunk the universe of allowable expression in ways that reverberated long after hindsight made society’s worries look a little silly.

No past panic captures the intensity and surreality of the current moment quite like the Dungeons & Dragons scare of the 1980s. Over a roughly five-year period in that decade, there were 28 cases of adolescents who played Dungeons & Dragons and later committed murder or suicide.

There was the case of 17-year-old player James Dallas Egbert III, whose disappearance into nearby woods inspired speculation from the press that he had lost the ability to distinguish between himself and the game character he roleplayed. There was also 16-year-old Irving Pulling, whose death inspired his mother to start the public advocacy group “Bothered About Dungeons & Dragons” (B.A.D.D.). Yes, really.

The media, as it is wont to do, ran with it, featuring her in a 1985 60 Minutes segment that gives a good sense of just how strong the panic was and how readily it sidelined experts with appeals to emotion. “The families who have suffered the loss of a loved one would disagree,” the narrator says, as the muted objections of a skeptical clinical expert play in the background. “If you found 12 kids in murder-suicide cases with one common factor,” he presses, “wouldn’t you question it?”

With the clarity of hindsight, the math finger-paints a pretty silly picture. “By 1984, 3 million teenagers were playing Dungeons & Dragons in the United States and the baseline suicide rate of adolescents overall would have been about 360 suicides each year,” University of Virginia pathology professor James Zimring has pointed out. “So, when you look at the bottom of the fraction, at the denominator, Dungeons & Dragons was, if anything, protective. It had the opposite effect.”
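To make the denominator point concrete, here’s a back-of-envelope sketch in Python that uses only the figures quoted above. It assumes, as Zimring’s framing implies, that the roughly 360 figure is the number of suicides you’d expect each year among 3 million teenage players at the general adolescent rate, and it lumps the 28 reported murder and suicide cases together over a flat five-year window. It’s a rough illustration, not an epidemiological analysis.

```python
# Back-of-envelope check using only the figures quoted in this newsletter.
# Assumption: ~360 is the expected yearly number of suicides among ~3 million
# teenage D&D players if they died by suicide at the general adolescent rate.
players = 3_000_000
expected_suicides_per_year = 360
years = 5            # the "roughly five-year period" of the panic
reported_cases = 28  # murders AND suicides combined, across that whole period

# Baseline rate implied by Zimring's numbers, per 100,000 people per year.
implied_rate_per_100k = expected_suicides_per_year / players * 100_000

# Suicides you would expect among players over the whole period at baseline.
expected_over_period = expected_suicides_per_year * years

# Reported D&D-linked cases per year, for comparison.
reported_per_year = reported_cases / years

print(f"Implied baseline: {implied_rate_per_100k:.0f} per 100,000 per year")
print(f"Expected suicides over {years} years at baseline: {expected_over_period}")
print(f"Reported D&D-linked cases per year: {reported_per_year:.1f}")
```

Even counting murders and suicides together, the reported cases work out to a handful per year, set against roughly 1,800 suicides you’d expect from that population over the same stretch at baseline rates.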

We shouldn’t have to wait for the chatbot panic to be in the rearview mirror to do the same math with the 13 to 18 million teenagers and 130 million adults using ChatGPT and other AI chatbots. Set the small number of (emotionally potent) cases against that denominator, and it starts to look like AI may be causing psychosis after all, just not in the way people think.

Exploding Books and Dangerous Ideas

It’s not just “standard” First Amendment law that these lawsuits get wrong. In an effort to get as far away from speech as possible, plaintiffs’ lawyers have turned to product liability law. After all, who could argue with the idea that a company has an obligation to design safe products, right?

But when you drill down into it, they aren’t really talking about “products” at all.

The Garcia case alleged, for example, that Character.AI designed products that caused users like Sewell to “conflat[e] reality and fiction.” That should sound awfully familiar: it’s basically the same accusation grieving mother Sheila Watters made in 1989 against Dungeons & Dragons maker TSR.

As the court’s decision dismissing the suit in Watters v. TSR, Inc. describes, she “cast[] her son as a ‘devoted’ player of Dungeons & Dragons, who became totally absorbed by and consumed with the game to the point that he was incapable of separating the fantasies played out in the game from reality.” According to her suit, this made the product (i.e., the game) “unsafe,” and TSR should pay.

But the Watters Court rejected this theory of liability—the same theory underlying most if not all of the chatbot lawsuits.

The Sixth Circuit, upholding the district court’s dismissal, observed that the harm originated not from the tangible properties (or even the rules) of the game, but from the ideas expressed through its storyline, which meant the case wasn’t really about a defective “product.” A court examining claims that violent video games caused the Columbine shooting reached the same conclusion: “There is no allegation that anyone was injured while Harris and Klebold actually played the video games . . . The actual use of the [] video games, then did not result in any injury. . . . So, any alleged defect stems from the intangible thoughts, ideas and messages contained within . . . .”

That’s an important distinction. Product liability is generally imposed on tangible “products” (think brakes, tires, dishwashers) that carry inherent and unreasonable dangers hidden from consumers, or for which a safer design exists, because the manufacturer is in the best position to prevent the harm. It often applies without any showing of fault, which is why it’s called “strict liability.” In other words, the physical thing hurts you physically.

Imagine that you purchase a book. If the book’s binding explodes when you open it, you’ve got a product liability claim. The physical book, regardless of what its pages say, exploded in your hands, and there’s no harm to free expression in saying you can’t sell a book that doubles as an IED.

But suppose you were harmed because you did something stupid after reading ideas in a book. You might be able to see how imposing liability for “dangerous” ideas would set us down a dark path; every author and publisher would have to make sure that the ideas they put out in the world couldn’t possibly be interpreted or used to some harmful end. If you’ve ever met other human beings, you already know that the list of such ideas is…quite short.

And that’s exactly what drove the outcome in Watters. The district court noted that “[t]he theories of liability sought to be imposed . . . would have a devastatingly broad chilling effect on expression of all forms. . . . The [F]irst [A]mendment prohibits imposition of liability . . . based on the content of the game . . . .” The appellate court saw a similar unavoidable impact of allowing for such liability: “The only practicable way of ensuring that the game could never reach a ‘mentally fragile’ individual would be to refrain from selling it at all.”

Tale as Old as Time, Song as Old as Rhyme

This understanding has been applied across many forms of media and entertainment. In McCollum v. CBS, Inc. and Vance v. Judas Priest, the musical artists Ozzy Osbourne and Judas Priest were sued over claims that their music drove two young men to suicide (an attempted suicide, in the case of Vance). As in Watters and the recent chatbot cases, the plaintiffs were the young men’s families.

Their lawsuits were unsuccessful. The McCollum court shared the Watters court’s concern about liability chilling creators’ expression, warning that “such a burden would quickly have the effect of reducing and limiting artistic expression to only the broadest standard of taste and acceptance.” It accordingly noted that in the history of attempts to assign tort liability for electronic media inciting unlawful conduct, “all … have been rejected on First Amendment grounds.”

For other cases in this vein, check out this article explaining why a law making social media platforms liable for the posts their algorithms promote is doomed to fail.

Which brings us back to Garcia and the argument FIRE made in our brief—and will inevitably have to make again.

If courts force AI developers to answer in tort every time a user has a tragic or delusional reaction to a chatbot, the incentive is obvious: developers would have to “sanitize their outputs to only the most safe, anodyne, and bland ideas fit for the most sensitive members of society.” In other words, unless you want BarneyBot to be the only AI you’re allowed to use, think twice about demanding that developers anticipate the actions of fragile and already unwell people.

But it’s even worse than that. Movies and music are, to a large extent, consumed as finished works. AI helps people create and speak. It’s not only a question of what content AI can deliver to you; it’s a matter of what you will be able to say using AI. Total safety tends to come at a steep, and unacceptable, price.

Freedom dot What?

The Department of Homeland Security has launched a portal that will reportedly allow Europeans to circumvent local content controls and view any content they wish. For now, the portal, Freedom.Gov, is nothing more than a splash screen reading “Information is power. Reclaim your human right to free expression.” But what do we think of its promise?

Well, we appreciate the idea of defending access to censored speech abroad, particularly given the state of things across the Atlantic. However, such a speech portal raises serious trust, transparency, and structural concerns inherent to its status as a government-operated tool.

For example, it’s unclear as of now what data it may collect from users and whether there will be meaningful transparency about logging, retention, or its technical design. There’s also the broader issue of putting the government in the position of arbiter of access to lawful speech online. What if the administration decided it didn’t want Europeans to see a certain category of content?

And will they allow Americans in states with repressive age-verification laws to use the portal to access age-restricted content (in some states, even social media)? Or is freedom only for Europeans? And how would any of that be operationalized?

Information is power, as the government portal reminds us. The government, and particularly this administration, is going to have a lot of work ahead of it convincing anyone to actually trust this portal. (And please, for the love of all that is Bad and Unholy, don’t enter any sensitive information into it.) State actors are probably best off enabling independent tools rather than operating the mechanism themselves. The Open Technology Fund was one such conduit for supporting independent efforts to expand access to free information abroad.

So where is it now? In court with the Trump administration, fighting to receive its congressionally authorized funding.

News You Should Choose (Quick Links)

Age Verification

Online age-verification tools spread across U.S. for child safety, but adults are being surveilled (CNBC) — Oh yeah? Tell me more about this brand new development that nobody has been warning you about for years.

Congress Is Considering Abolishing Your Right to Be Anonymous Online (The Intercept) — Taylor Lorenz pens another scorching takedown of age verification efforts, this one aimed at the United States.

Social Media

Ninth Circuit Guts California’s Kids Code Once Again (Techdirt) — Mike Masnick goes over the 9th Circuit’s latest decision in the never-ending, terribly convoluted litigation over California’s “Age Appropriate Design Code.” At this rate, someone will be able to make an entire law school casebook using only cases called “NetChoice v. Bonta.”

11th Circuit balks at Georgia’s social media crackdown for kids (Courthouse News Service) — Georgia defended its law in front of a panel of the U.S. Court of Appeals for the Eleventh Circuit, and CNS summarizes the oral arguments.

Section 230

Section 230 at 30: The Past, Present, and Future of Online Speech and the 26 Words That Created the Internet (Cato Institute) — Check out the videos of these three panels (one of which includes me!) + a Ron Wyden fireside chat on the 30th anniversary of Section 230’s passage.

International

Iran war triggers calls for censorship in UK as higher ed regulator seeks to monitor ‘extremism’ (Expression) — FIRE’s Sarah McLaughlin covers the entirely predictable, ham-fisted UK censorship demands triggered by the Iran War.

Artificial Intelligence

Another year, another session of AI overregulation (Expression) — John Coleman takes a look at the state legislative field for artificial intelligence this year.

TAKEDOWN + Clown Carr

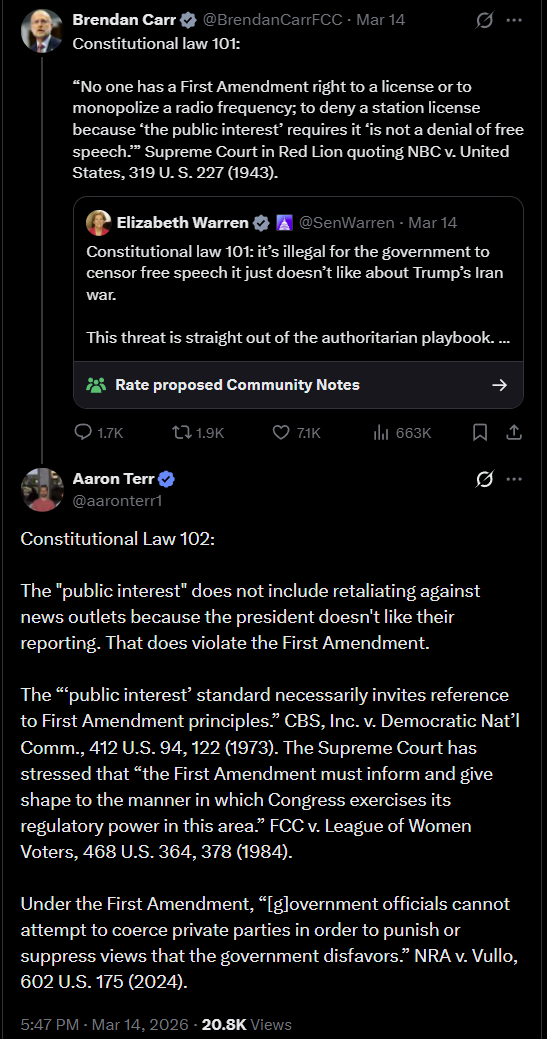

This week’s a twofer! Brendan Carr must have sensed our ongoing need for material, because he served up a real doozy.

On Saturday, responding to a real bitchfest from Donald Trump about news coverage of the war he started in Iran, Carr once again rattled his flaccid little saber, threatening that broadcasters will lose their licenses if they publish “fake news” and don’t act “in the public interest.”

Thing is, Brendan Carr’s idea of “the public interest” seems to curiously align with “whatever Donald Trump says.” We’ve written time and again about Carr’s unconstitutional threats and desire to control the media for the benefit of his master.

But he’s a cocky little fella somewhere near the far end of the Dunning-Kruger spectrum, so when he tried to take Senator Elizabeth Warren to school on the First Amendment, Aaron Terr gave him the ol’ what-for.

But if you’re expecting Carr to be chastened by this…see you in a couple weeks.