Notice and Takedown #3 — Two Juries Walk Into the Plaintiffs’ Bar

The plaintiffs’ bar walks out with $381 million and our First Amendment rights

In this issue:

The Unconstitutional Design Features of Social Media Lawsuits

Last week, juries in two states delivered back-to-back verdicts against social media platforms for harms they allegedly caused to adolescent users. The lawsuits are different in form (New Mexico brought its lawsuit under consumer protection laws, while the private plaintiff in California brought product liability claims), but they share a common framing: “We’re not imposing liability for the content on the platforms, we’re attacking the ‘design features’ of the platforms themselves.”

It’s easy to understand the motivation for that framing. Admitting Bad Content caused the alleged harms runs the lawsuits directly into Section 230, which protects platforms from being liable as the publisher of third-party content. And it would pose serious First Amendment issues to boot, because the vast majority of that content is constitutionally protected.

So the lawyers busted out that “one neat trick” of calling content something else and hoped the subterfuge would conceal their true target. Their argument is that users are harmed not by any content they encounter on the platforms, but rather by “design features” of the platforms themselves — like infinite scroll, autoplaying videos, and content recommendation algorithms — which are allegedly “addictive.” Those features, the lawyers allege, are designed to keep users engaged and active on the platforms to the detriment of their health and wellbeing.

That’s troubling in its own right. Even taken at face value, the plaintiffs in these suits want to punish social media platforms for making consumption of protected expression too appealing and engaging. Imagine such a claim in any other context. If I wrote an insanely good choose-your-own-adventure book, written to hit all the right psychological triggers, with 200,000 possible story paths, would anyone seriously believe that I should face massive legal liability if a reader just could not put the book down? I think not.

But we shouldn’t take this framing at face value, because really it’s cynical, rhetorical sleight-of-hand. “It’s not speech, it’s [some other thing we’re calling it]” is exactly what Texas argued when it defended its regulation of platforms’ content moderation practices in Moody v. NetChoice. The Supreme Court was…not impressed. As I wrote on Wednesday, “The First Amendment isn’t fooled by synonyms … the ways platforms arrange, display, and choose how users consume content are editorial choices that are protected by the First Amendment.”

Some would quibble with my book hypothetical on grounds that social media is different, because it is more interactive, and pushes content to users rather than being a passive source of expression. But it’s just the opposite. If anything, the effect of increasing interactivity and engagement actually demonstrates the expressiveness of those editorial choices. And as if to prove there is truly nothing new under the sun: We’ve been through this before.

Defending their violent video game regulations from First Amendment challenges, various governments argued that video games should be treated differently because they are interactive in a way that books and movies are not. In one such case before the Seventh Circuit, Judge Richard Posner made quick and eloquent work of that argument:

Maybe video games are different. They are, after all, interactive. But this point is superficial, in fact erroneous. All literature (here broadly defined to include movies, television, and the other photographic media, and popular as well as highbrow literature) is interactive; the better it is, the more interactive.

Speech isn’t any less protected because it is crafted to keep your attention. That’s the whole point of speech in the first place: to get people to listen to and engage with it. Recognition of the appeal, persuasiveness, and impact of expression — the things that make it effective in the first place — is the First Amendment’s raison d’être. It is no great leap to say that the plaintiffs’ arguments would create a First Amendment exception for speech that is too effective. That should terrify you, for reasons that I hope I do not have to explain.

And the rhetorical chicanery is just as obvious in its attempt to circumvent Section 230 by disclaiming that the liability is based on content. The distinction between “design features” and “content” is artificial and illusory, as I explained to the Massachusetts Supreme Court in November:

The Commonwealth’s argument presupposes the presence of third-party content that users find appealing or attractive. Were the only content on Instagram videos of beige paint drying, nobody would become addicted simply because Instagram notified users that another one posted then auto-played it in an infinitely scrolling feed. And, importantly, there would be no harm from the boring feed.

Similarly, if Instagram contained only the most deeply enriching, educational material, it is doubtful that—even if some youth engaged in compulsive use—the Commonwealth would file suit claiming that young users were spending an unhealthy amount of time learning on the platform.

Virtually every news article about these verdicts has referenced the thousands of cases waiting in the wings. And that’s precisely what Section 230 was meant to prevent: the existential threat of endless litigation that would make hosting user-generated content infeasible.

But why on Earth should you give a damn about whether these giant, unsympathetic tech companies have to face the music for zombifying the Youth of America?

I’m so glad you asked.

It’s exactly because what these lawsuits really target is speech. If social media platforms have to worry that they’ll be liable whenever someone uses the platform so much that the content they encounter causes mental health issues, the reasonable course of action is to make sure that their platform is less interesting, and purge any content that could plausibly be alleged to cause some kind of harm. That will have a direct, deleterious impact on what kinds of content you can consume, and what ideas you can express. (You might also find that platforms become unusable, as an entirely uncurated “firehose” feed will also be the safest bet. Nobody, and I mean nobody, wants a firehose feed.)

This is exactly why the courts have rejected attempts to impose this kind of liability against every other form of media.

Because proponents of these lawsuits like to compare Big Tech to Big Tobacco, let’s use cigarettes to illustrate (without explaining the blindingly obvious fact that cigarettes are not protected by the Constitution, while speech very much is).

When a human being smokes a cigarette, it has certain known, unavoidable, and relatively consistent physical impacts on the body. Speech is decidedly different: It impacts each person differently, based on personality, life experiences, and other factors unique to each person. A duty to protect against harms from speech is impossible to meet; the possibilities of how speech might cause harm to any person are limitless and unknowable. Courts have recognized that liability would cast an enormous chill over expression, limiting the universe of available content to only that which is suitable for the most sensitive and fragile individuals — a rather bleak and boring prospect that is incompatible with the First Amendment.

And the effects don’t stop at social media either. If a platform can be held liable when its delivery of content harms users, there is very little that prevents you from facing liability when your speech hits the wrong way, especially if you post somebody else’s content, because Section 230 protects that, too. The stakes here go far beyond the financial interests of big companies. They threaten to destabilize our system of free speech as we know it. (I strongly recommend reading Mike Masnick’s excellent rundown of the full range of implications over at Techdirt.)

Trial courts often do not like to dismiss lawsuits at an early stage. They, somewhat understandably, want to give people their day in court and allow them to mount a case whenever possible. Unfortunately, these two courts gave short shrift to critical issues that could upend the Internet and First Amendment doctrine. These jury verdicts are far from the last word, however. The appellate courts will have an opportunity to course correct, and hopefully they will reach the proper conclusion: These cases should have never gone to a jury in the first place.

Social media platforms have their problems, to be sure. But we’re not going to be better off with fewer places in which to speak our minds and the constant threat of liability for “harmful” speech limiting the ideas we can safely express.

NOTICE

Social media “addiction” has been a hot-button topic for a while now. But the verdicts last week, both of which centered in large part around the supposed addictiveness of platform design, have taken the discourse to a new level—especially when it comes to content recommendation algorithms and personalized feeds.

But looking at it from the opposite angle makes it even more obvious that specific content, not “addiction,” is really the harm these claims are aimed at.

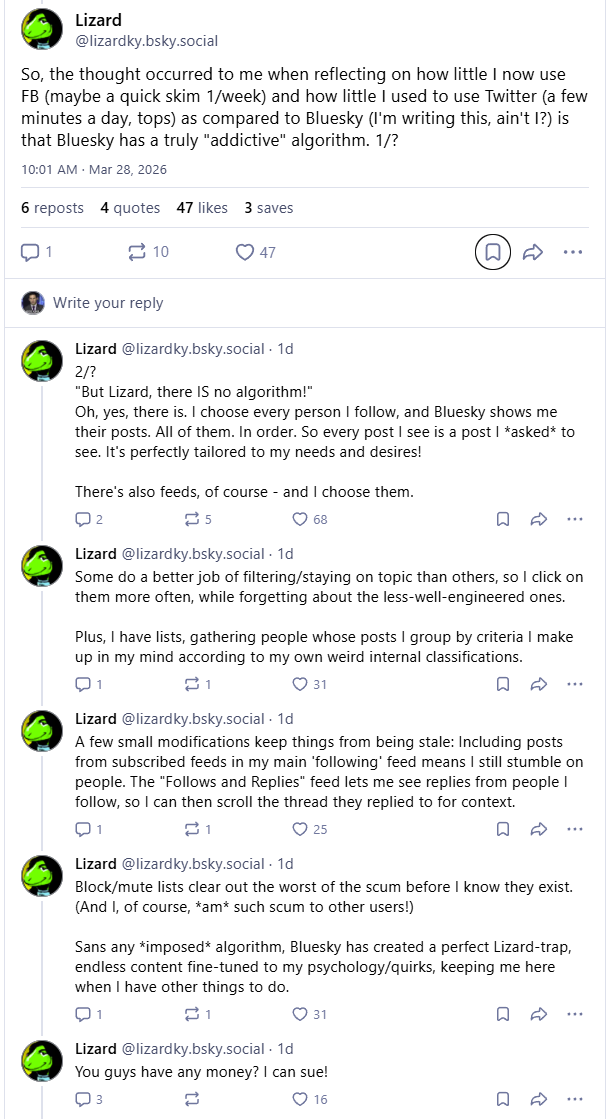

Bluesky user @lizardky.bsky.social wrote a thought-provoking series of posts (login required, but reproduced below) asking the question: Isn’t a system like Bluesky, where there is no content-recommendation algorithm and users can tailor their feeds to contain precisely the people and types of posts they want to see, even more addictive?

And if that is so, what’s the end-game for these lawsuits? If you get rid of the platform-imposed personalized feeds and that results in an even more relevant and engaging feed, you won’t have solved the purported problem. So it seems clear that the actual complaint is: the specific content being delivered by the content-recommendation algorithms is the source of the harm.

Oops!

The Rise and Folly of Joseph Gordon-Levitt

Remember how, last year, it seemed like Pedro Pascal was in every single movie? Well, in the year 2012, that was Joseph Gordon-Levitt. Fresh off his 500 Days of Summer and Inception success (true fans will reach deeper to Brick and Mysterious Skin), his name littered the posters in the cinema lobby — The Dark Knight Rises, Looper, Premium Rush (did anyone actually see that movie?), even Lincoln. The future looked like Joseph Gordon-Levitt.

At the same time this was happening, he was working to grow a collaborative media platform he had originally founded in 2006 called HitRecord, where users could create, record, and remix each other’s art. With its emphasis on community and connecting artists from across the globe, HitRecord was the platonic ideal of the internet, and it was an incredibly exciting project for young, highly amateur filmmakers (including me!) and artists at the time.

Most astonishingly, JGL’s highly successful acting career would soon take a backseat to HitRecord. As he took on fewer and fewer big screen projects, HitRecord went on to fuel an Emmy-winning TV show, supply the creative assets for multiple blockbuster video games, and reportedly reach over 850,000 users. Now, the actor, who has come to see himself as something of a guiding shepherd for HitRecord’s tight-knit and dedicated community of creators, has taken on the role of an activist, championing the creative arts as embodied by HitRecord in a world of rapidly advancing technology. Presumably, he’d be a natural ally to our cause of free expression, right?

Sadly, not quite. At least not yet.

Gordon-Levitt’s foray into activism started in 2023, when he wrote an op-ed in the Washington Post arguing artificial intelligence companies should pay creators “residuals” for the use of their art as training data. Launching a technology and politics-focused Substack last year, he’s since grown into an all-purpose campaigner for restrictions on expressive technology. And the Brick actor has been busy.

At the state level, he recently testified on behalf of Utah’s HB 286, an AI bill that requires developers of frontier models to implement “child protection plans” into their models. “These amoral AI businesses … have proven time and time again that they are incapable of prioritizing the well-being of kids,” said Gordon-Levitt in his testimony. At the national level, he joined Senator Dick Durbin at a Capitol Hill press conference to promote a bill which would sunset Section 230 and open up online platforms to a whirlwind of lawsuits.

The scope of his insight seemingly knows no bounds. On March 17th, the global community formalized his new role with an appointment as the United Nations’ first “Global Advocate for Human-centric Digital Governance.” The 10 Things I Hate About You actor is, once again, everywhere.

We’re not here to say stay in your lane, celebrity! As a bona fide tech entrepreneur, the Beverly Hills Cop: Axel F actor (last condescending epithet, we promise) has every right to opine on regulation affecting his company, and to chart a vision for the technology world he wants it to be a part of.

But JGL should know the vision he’s been looking to impose on the world of expressive technology will undermine the principles which underpin HitRecord’s success and everything good it represents about the Internet. Let’s look at HitRecord again.

The beauty of the platform lies in its seamlessness. It takes about 10 seconds to create an account, another 3 seconds to post content, and when you want to remix somebody else’s content, you’re instantaneously given a nice crisp .jpeg or .mov file to transform. Someone’s storyboard becomes someone else’s animation becomes someone else’s backdrop for a short film. The only substantive interruption in this otherwise frictionless process of collaboration and expression is checking a box that no copyrighted material was used in your uploads. (This doesn’t include HitRecord’s creations, which are all designed to be freely shared among the community.) Otherwise, the terms of the process are set by HitRecord’s users.

This hands-off approach is made possible by the U.S.’s First Amendment-informed approach to the Internet, and particularly Section 230’s liability shield, which places responsibility for any wrongdoing by users with the users themselves. It allows HitRecord to sit back and let the garden grow. Were the Section 230 sunset bill to pass, HitRecord would find itself thrust into a new kind of role with its community. Because HitRecord would now be legally responsible for the nature of each and every artistic exchange, some level of friction would necessarily be introduced into its freeflowing creative process so that its lawyers could show a court they had done their due diligence with regard to potentially unlawful content. We’ve seen what form this due diligence takes in countries with very different legal systems from the U.S.: upload filters, age verification, automated enforcement.

To his credit, Gordon-Levitt has come to recognize some of these points, at least with respect to Section 230 and HitRecord. “A platform like HitRecord and so many others would have a lot of trouble existing without the protections afforded by Section 230,” he admitted in a March 14th interview. “I’ve decided not to support this particular bill moving forward.” We think this is an excellent development and it’s always a green flag when somebody can change their mind in response to new information, but he should understand this isn’t limited to Section 230.

Disruption of the Internet’s profound speech- and creativity-enabling characteristics is the inexorable result of the techlash policies to which he’s attached himself. They will be sacrificed on the altar of safetyism, in pursuit of a child-proofed Internet. Some readers may find these barriers a marginal irritation to them personally, no doubt. But in the aggregate, each and every one of these barriers will deter — or even prohibit — innumerable people from contributing to a vibrant and dynamic Internet. It can be easy to forget these effects when sprawling tech giants like Meta and Google hold the spotlight in the debate, but these restrictions are a betrayal of the spirit which has allowed HitRecord to thrive too.

The Clown Carr Unloads at CPAC

When we first had the idea for this recurring section, we figured that it would be fun to revisit every few issues when Brendan Carr gave us new material. Boy, did we get that cadence wrong. Every time it seems like there couldn’t possibly be any shamelessness or hypocrisy left in there, Carr pops his head out of the door you could have sworn you just saw him come out of.

The man is a veritable Endless Handkerchief Chain of unprincipled hackery.

Carr’s latest unfortunate occupancy of our headspace comes in the form of a CPAC appearance that rounded out a cycle of gaslighting and criminality we know all too well. The cycle goes something like this: The Trump administration engages in seemingly (but not actually) lawful activities with ostensibly reasonable and particularized justifications. With Mr. Carr, this often takes the form of “we’re just holding broadcasters to the public interest standard!” We point out that these activities are in service of a broader nefarious and unlawful end like, say, shutting down news coverage critical of Trump. Administration officials and allies then blast critics for being hysterical or not understanding what the administration is actually doing. Finally, at the end of the cycle, the administration, like the Scooby Doo villains they are, gleefully admits to the nefarious and unlawful intention to the applause of their devoted followers.

We saw Trump do this with a deep-fried celebratory graphic a couple weeks ago. Now, Carr just couldn’t help but pat himself on the back for his part in their shared conspiracy against a free press. The only silver lining would be the look on Carr’s face if these remarks were ever to get played back to him in a court of law in a jawboning case, as they most certainly would.

News You Should Choose

Artificial Intelligence

Hegseth’s War On Anthropic Encounters The First Amendment (Techdirt) — Cathy Gellis gives a thorough summary of Judge Lin’s ruling that the Department of Defense likely violated the First Amendment when it petulantly and unlawfully designated Anthropic as a “supply chain risk” for pushing back against demands that it allow Claude to be used for domestic surveillance or autonomous weapons.

AI + 1A: Why the First Amendment Protects Artificial Intelligence (PDF) (TechFreedom) — My esteemed former TechFreedom colleague Corbin Barthold has an excellent new whitepaper out making the argument that AI outputs are protected by the First Amendment.

Age Verification

Users hate it, but age-check tech is coming. Here’s how it works. (Ars Technica) — A look at different attempts to solve the intractable privacy issues of age verification, and how they (don’t) work.

When age verification moves into your operating system (Proton) — There’s a new age verification trend: putting the onus on operating systems, even for your computer. The threats to privacy and freedom of expression intensify.

Copyright

Supreme Court Agrees With EFF: ISPs Don’t Have To Be Copyright Enforcers (Electronic Frontier Foundation) / ACLU Celebrates Supreme Court Decision Promoting Free Expression Online (ACLU) — Last week, the Supreme Court tossed a massive $1 billion verdict against Cox Communications, holding that ISPs cannot be held liable for failing to police copyright infringement by users. That liability would have posed massive consequences for online speech: ISPs would have been forced to shut off Internet access entirely for some people (and the people they live with who did nothing wrong).

Section 230

Congress considers blowing up internet law (The Verge) — A rundown of the Senate Commerce Committee’s March 18, 2026 hearing on Section 230 and the movement to destroy it.

What Does The Viral Afroman Trial Have to Do with Section 230? (Techdirt) — Kate Ruane, Director of the Free Expression Project at the Center for Democracy & Technology, explains that without Section 230, we would have been wrongly deprived of the opportunity to listen to Afroman’s songs mocking an unjustified police raid on his home. (They were going to make sure they had legitimate grounds for a warrant, but I’m guessing then they got high.)

Social Media Litigation

The Big Tech verdicts you’re cheering for are actually terrible for free speech (Expression) — My initial take in the immediate aftermath of the California social media addiction lawsuit verdict.

Everyone Cheering The Social Media Addiction Verdicts Against Meta Should Understand What They’re Actually Cheering For (Techdirt) — Mike Masnick does a terrific job of running through the list of terrible implications if the social media verdicts are allowed to stand.

International

UK tests out ‘digital curfews’ and considers a teen social media ban as Australia updates rules for its landmark policy (Expression) — FIRE’s Sarah McLaughlin explains that the UK, not content with only having a few terrible ideas about restricting online speech, is now considering “digital curfews” and teen social media bans.

Jawboners

A Litigation Playbook for Narrative Warfare (Lawfare) — Renee DiResta reviews the new book from Missouri Senator Eric Schmitt (former Missouri AG and architect of the Missouri v. Biden lawsuit) and finds that not only is it riddled with falsehoods, it also somewhat shockingly admits out loud what was obvious to most: the case was never about facts, law, or justice — it was just about narrative.

TAKEDOWN

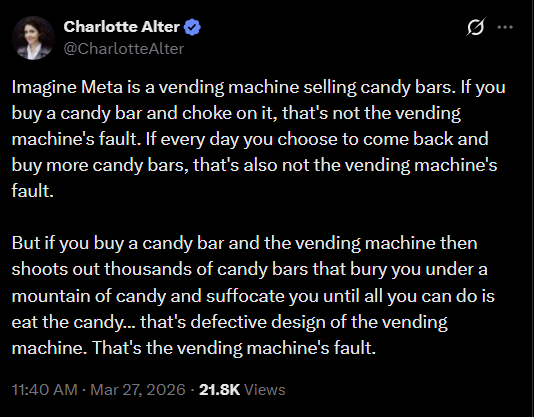

In the days following the California social media verdict, it felt like more than half the Internet was doing a coordinated, unannounced “wrong answers only” bit. And nobody was more committed to the bit than TIME correspondent and feminist thriller author Charlotte Alter. I guess if you want to be an optimist about it, her posts were some true works of fiction.

There was a bizarre analogy comparing a social media platform to…a vending machine that gives you free candy and also apparently defies the laws of physics?

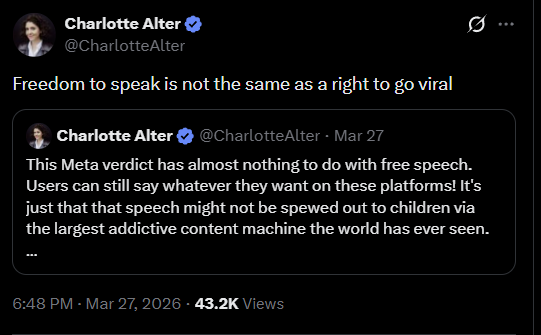

And then she tried her hand at First Amendment law, inventing a heretofore unknown doctrine that while the First Amendment protects your right to speak, it does not prohibit the government from limiting how many people can hear you speak:

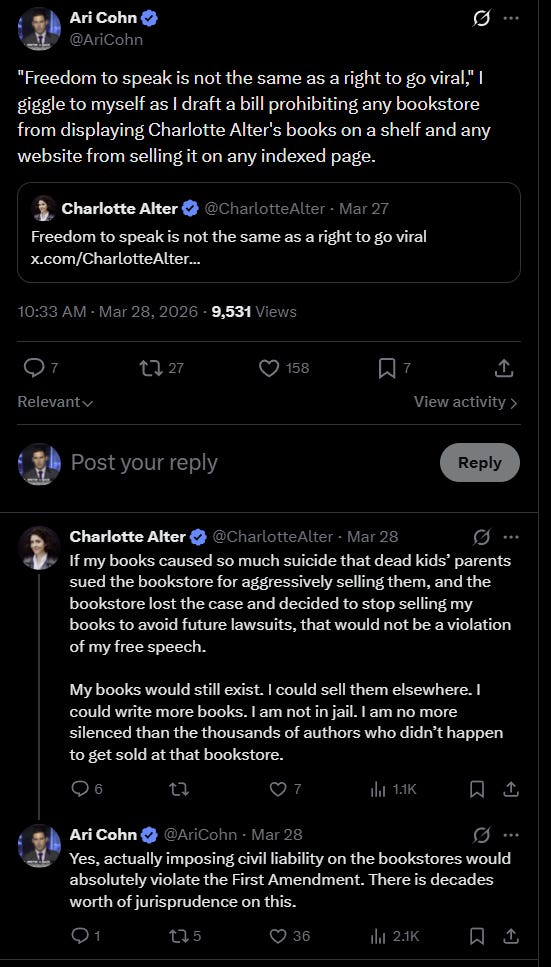

Then she really dug deep to get even wronger. When I pointed out how that makes absolutely no sense, she confidently (but wrongly) informed me it would not be a free speech issue at all if parents sued bookstores because her books were causing suicides, forcing them to stop selling the books.

If her books are half as bad as her free speech takes, we may yet have an opportunity to test her theory out.